CindyB

You can also get an electrolyte panel: https://ownyourlabs.com/test/77efb80e-2b60-4866-9823-886b3889fc87?back=%2Fshop It's also worth noting that nighttime cramps may have other causes unrelated to electrolytes. You can start with the blood test and see where you might add or back off on certain electrolytes. But if things look fine, we might have to keep digging.

3 Year Carniversary! - Ask Me Anything!

🎉 My 3-Year Carniversary on Carnivore! 🎉 Ask Me Anything, Live! Three full years of a mostly carnivore-centric, meat-based diet. Let's celebrate together. Join us LIVE on screen with your own device. In this special AMA episode of Carnivore Talk, I’m opening the floor to YOUR questions. Whether you’re just starting out, dealing with adaptation struggles, curious about long-term results, or want the real talk on energy, sleep, mental clarity, skin, digestion, or anything else, now’s your chance. Drop your questions in the live chat as we go; no topic is off limits! WATCH: https://www.youtube.com/live/Ld2vr_daOg4?si=Vjw0F069SEzRtev8

Does refeeding syndrome even occur on keto/carnivore?

You greatly reduce your risk of issues by avoiding carbohydrates, especially if you have been keto-carnivore for some time and are already fat adapted. Protein and fat stimulate less insulin release and also result in smaller overall electrolyte shifts. In cases of severe starvation, it could still be an issue. The recommendation is to reintroduce food slowly: start with something small and mild, like bone broth or an egg, and watch for symptoms. Then add more food gradually.

2-year anniversary

You have to know your audience. Some people appreciate the reminder: "Oh yeah, you're right. I did feel so much better on carnivore." For others, pointing out their flaws offends them. In that case, pointing out your own successes and reasons might make them think more deeply without turning attention to them directly.

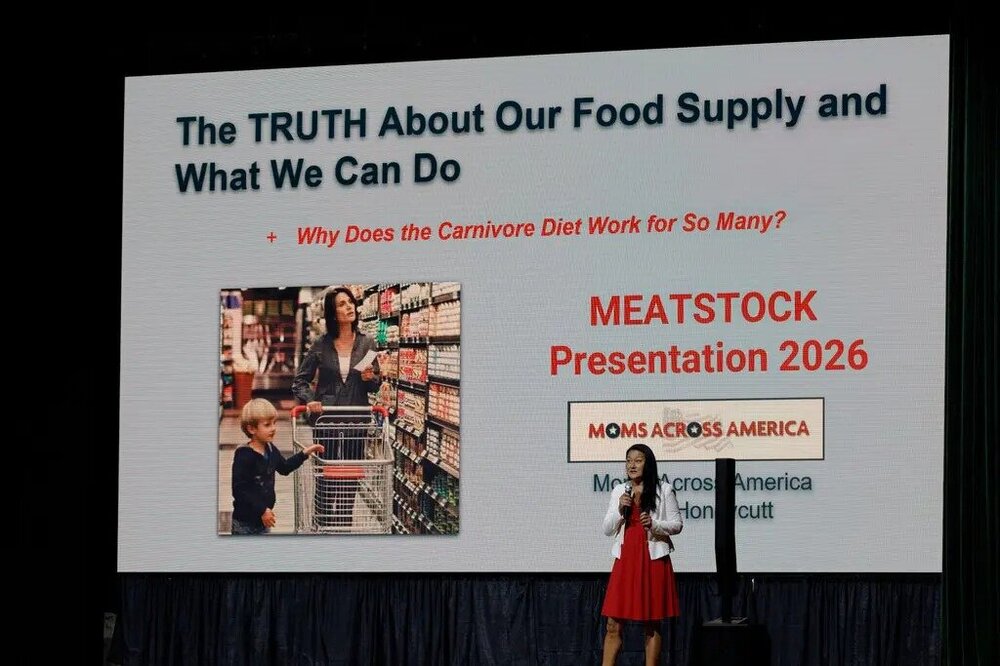

Inside a Carnivore Convention Where Meat Is Considered Medicine

Next year it moves to Nashville, Tennessee. I think I might try to attend it myself.

What Did You Eat Today?

Some friends invited us over for dinner yesterday. Their daughter is also carnivore (I regularly coach and converse with her), so they knew how to accommodate me. They had nachos with taco fixin's, but they had bought a bag of high-quality pork rinds for me. I topped them with the seasoned beef, chicken, sour cream, and cheese. It was delicious, and I was able to "fit right in" and crunch along with everyone else, lol.

Inside a Carnivore Convention Where Meat Is Considered Medicine

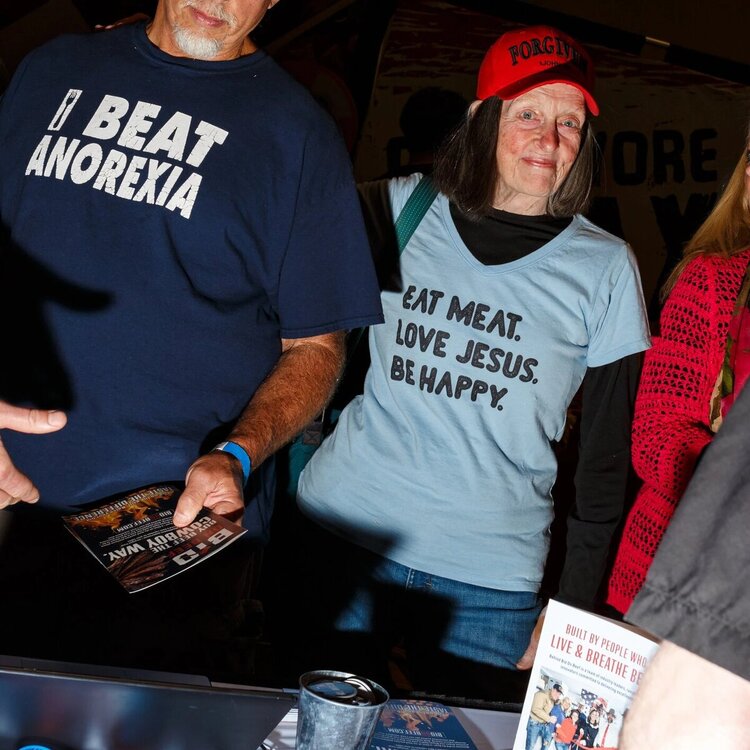

Devotees of the diet, which Health Secretary Robert F. Kennedy, Jr. follows, bonded over brisket and butter at Meatstock.

By Dani Blum. Photographs by Juan Diego Reyes for The New York Times. Reporting from Gatlinburg, Tenn. May 5, 2026

Lisa Moss roamed the halls of Meatstock with a butter keychain dangling off her bag and a pin on her jean jacket that read: “I <3 steak.” She carried a bag of air-dried steak with her, just in case she needed a snack.

Ms. Moss, 57, and her husband, Phil Moss, had flown from Alberta, Canada, to Meatstock, the three-day carnivore convention in Gatlinburg, Tenn. They were among more than 1,400 attendees who came to see their superstars (influencers who went by handles like “Steak and Butter Gal” and “2 Krazy Ketos”) and to meet other like-minded people who follow a carnivore diet of primarily or solely animal products, often forgoing fruits and vegetables entirely.

“I’ve had people say that to me: ‘Don’t you want to just be normal?’” Serena Musick, a carnivore influencer, said during a panel on carnivore cooking tips. “If being normal means that you can’t exercise, and being normal means you can’t stand up without your knees or back hurting, then I don’t want to be normal,” she added.

Talking to one another over brisket dipped in butter and cups of raw milk, they shared what they called their “testimonies,” describing how they believed the diet had healed a wide array of ailments, including arthritis, mental illness and diabetes. One attendee carried a pair of jean shorts that were nearly twice as wide as his waist, to show off the weight he’d lost since “going carnivore.”

Most doctors would disagree with the attendees’ enthusiastic claims about the diet’s benefits. They have urged people to eat less red meat, warning that consuming too much raises cholesterol and increases the risk for heart disease. And they have stressed that fruits and vegetables are essential to prevent chronic illnesses.

Those perspectives are of little interest to many at Meatstock. After shunning traditional diet advice, they have gone on to lose faith in conventional medicine and health guidance more broadly. It’s not just a diet, they said; it’s a mind-set. “It’s rethinking, relearning what we’ve all been taught,” said Ms. Moss, who adopted the carnivore diet seven years ago and wore a hat bearing the word “Tinfoil.”

Followers of the carnivore diet remain a niche community, but their worldview is gaining more legitimacy. Health Secretary Robert F. Kennedy, Jr. has said he follows a carnivore diet, which he has claimed could eliminate dangerous body fat. When Mr. Kennedy unveiled a new food pyramid this year, steak earned a top spot. Several of his prominent allies spoke at the convention, including Calley Means, his close adviser; Zen Honeycutt, founder of the advocacy group Moms Across America; Vani Hari, a food activist known as “The Food Babe”; and Alex Clark, the host of a popular wellness podcast.

While many of the attendees said they weren’t particularly interested in politics, their statements often echoed the rhetoric of Mr. Kennedy’s “Make America Healthy Again” movement. They were eager to trade prescription drugs for added helpings of beef and painted mainstream medicine as trying to profit off patients.

Attendees crammed into conference rooms for presentations on raw meat and food addiction, as well as a seminar that questioned whether high cholesterol could actually lead to heart disease. (It can.)

In the exhibit hall, women in bonnets sold raw cheese and butter, advertised as “for cats and dogs” to skirt restrictions on selling those products to humans. People drifted between booths selling meat-centric items like tallow lotions and a cereal made of ground beef. Non-edible offerings included a holistic health class for home-schooled teens and a tool to block radiation from cell phones.

Veronica Eggleston, 24, said that she had become increasingly attentive to what she puts into or on her body since she adopted the carnivore diet in high school. She replaced her traditional sunscreen with a tallow-based product, for example. Ms. Eggleston, who attended the conference with her mother, said that one of the hardest parts about adhering to the diet was the pushback she received from friends and co-workers. “It’s so nice to not feel weird, to be in a space where I’m not constantly getting questions or personal attacks,” said Ms. Eggleston.

Many of the attendees also said they were there to find community. Some even walked around with cutting boards that they asked others to sign, like high school yearbooks.

“There just seems to be such a camaraderie here,” said Karen Chandler, 65. “That’s felt really good for me, because I’ve been kind of out there by myself.” Ms. Chandler was sitting next to Christy Desautels, 59, whom she had befriended on the bus from the airport. The two were now talking over lunch: plain burger patties heaped high on silver trays.

Attendees wore shirts with a variety of slogans, such as “Real Women Eat Meat” and “Eat Meat and Question Everything.”

Another attendee, Adi Lavi, 34, seemed concerned with matters beyond friendship: She walked around the exhibit hall, wearing a bag that said “Ask me about carnivore dating.” She had become a carnivore while in a relationship with someone who “believes in conventional medicine,” she said. That divide was one of the main reasons they broke up. Now she was starting a matchmaking service, just for carnivores.

Dani Blum is a health reporter for The Times.

ARTICLE SOURCE: https://www.nytimes.com/2026/05/05/well/meatstock-carnivore-diet-rfk-jr.html

2-year anniversary

Awesome! And happy Carniversary! It just dawned on me that my 3-year carniversary was May 12th. And @Geezy's is May 9th. Fun that we are all May meaties :)

Progress over Perfection

Tired of quitting carnivore because you “messed up” one meal, couldn’t afford grass-fed, or had a slice of cake at a birthday? This episode is your permission slip to stop chasing perfection and start focusing on consistent progress, the real secret to long-term success. In 2026, the carnivore community is embracing realistic, sustainable eating over rigid rules. Learn how an “imperfect carnivore” approach still delivers massive wins in energy, fat loss, mental clarity, and health transformations. Whether you’re just starting, coming back after a break, or deep into your journey, this episode will help you stay in the game and actually enjoy it. WATCH: https://www.youtube.com/watch?v=DYLPzUPlZ9c

Post a picture... Any picture

- Funny Memes

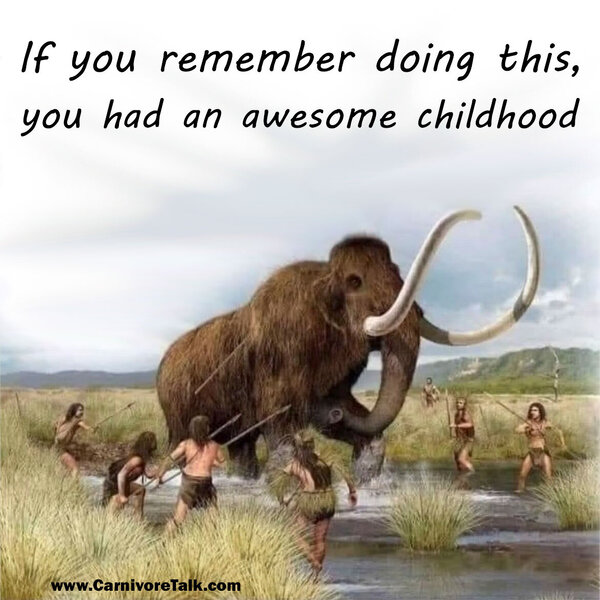

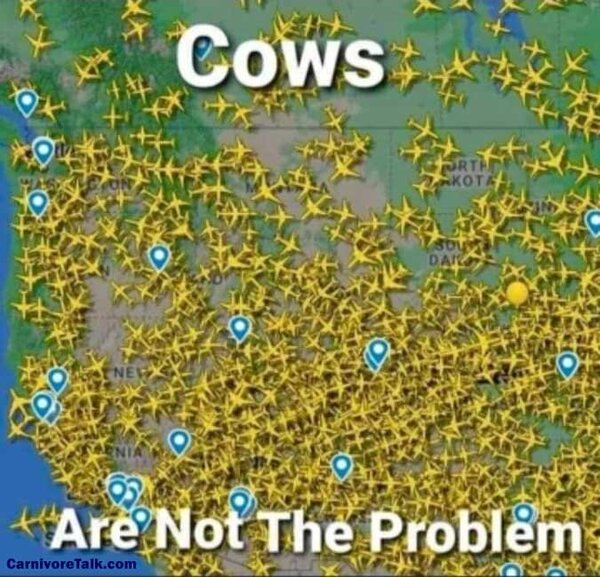

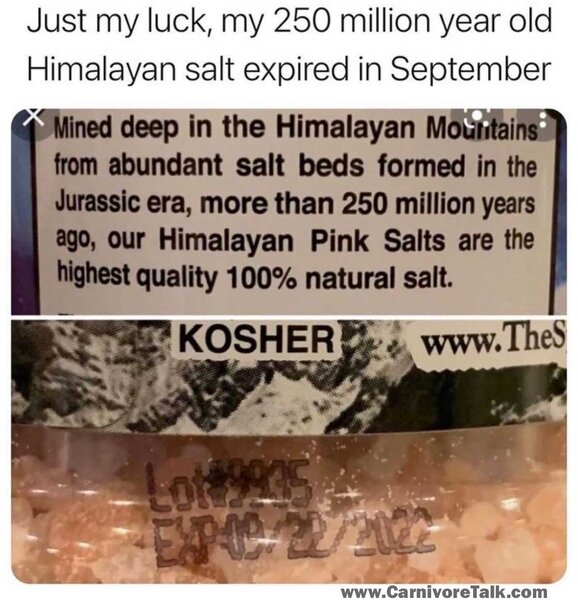

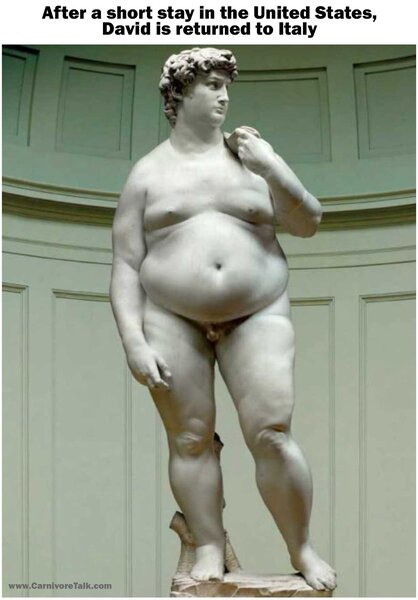

Just general memes. Some of them may be health-related but didn't really fit into the Keto or Carnivore categories all that well.

- Did Humans Evolve To Eat Meat?

Cain no doubt toiled to produce his offering. God accepted grain offerings later on in the law of Moses, and God makes no mention of the manner in which the offering was made. But some other verses help draw a clearer picture.... The implication here is that Cain lacked faith. Now compare this thought with this verse.... So it would seem that God did not look with any favor upon Cain himself, and not just his offering. As a loving father, God even tried to correct Cain.... The language here implies that Cain was not regularly doing good, but rather regularly doing bad deeds. So it runs much deeper than his offering of fruits and vegetables.

- Just started carnivore

With the parents' permission, you should make that a publicly visible short. Now I want to film babies trying steak, lol

- Eat More & Crave Less with a Carnivore Diet

Tired of being hungry on carnivore even after big meals? In this episode, we reveal why most people still battle cravings and how to finally eat more and crave less, the real way. Discover the power of true satiety: learning when to stop eating, why fatty meat is your best friend, and how to rewire your hunger signals so food noise disappears. No calorie counting, no grazing, just simple, sustainable carnivore eating that delivers steady energy, effortless fat loss, and real satisfaction. Drop your biggest craving struggle in the comments, and I’ll reply to as many as possible! WATCH: https://www.youtube.com/watch?v=302JucJFpNY

Welcome to Carnivore Talk!

Our Carnivore Forum is a community of friends focused on an animal-based ketogenic lifestyle. Become a member today for FREE and gain the knowledge and expertise you need to take control of your own health. We look forward to supporting you on your personal health journey.

Why Keto & Carnivore?

We believe that a proper, all-natural human diet should be meat-based, whether that's a keto, ketovore, or carnivore lifestyle. You will thrive on the most nutrient-dense foods on the planet, lose weight, and possibly reverse disease and chronic illness, so why not give it a try?
